OpenClaw Docker: Deploying Private AI Infrastructure

Last updated: May 2026

Most IT Directors think standing up a private AI agent is a weekend project. They run a docker-compose up command, watch the logs turn green, and declare victory. They are wrong. A single container running locally is a toy; an orchestrated, secure deployment is a workforce.

We have spent the last three years deploying private AI systems for mid-market operators, and the hardest part is never the initial code. It is establishing the data boundaries. OpenClaw Docker deployment is the process of containerizing the OpenClaw agent operating system on your private infrastructure so that autonomous workflows run without leaking company data to public models.

- What it is: A containerized, production-ready deployment of the OpenClaw agent OS for private infrastructure.

- Core Containers: Node (the engine), Workspaces (isolated volumes), and Browser Relays (secure web navigation).

- Security Posture: Runs entirely on-premises or in your VPC, so prompts and business data stay inside infrastructure you control.

- First Step: Map your departmental workflows and access permissions before writing any Docker Compose files.

Why You Cannot Just "Docker Run" an AI Workforce

If you treat an OpenClaw setup like a standard web application, you will build a massive security liability. Your developers are going to want to build the underlying architecture from scratch or rely on a simple default configuration. Do not let them. They will spend six months building a fragile queueing system instead of solving business problems.

When you deploy an AI agent, you are giving a language model the ability to read files, execute commands, and navigate web portals. In a local development environment, running a single Docker container with root access to your host machine might be acceptable for testing. In production, that same configuration means a hallucinating agent could accidentally rewrite your entire database.

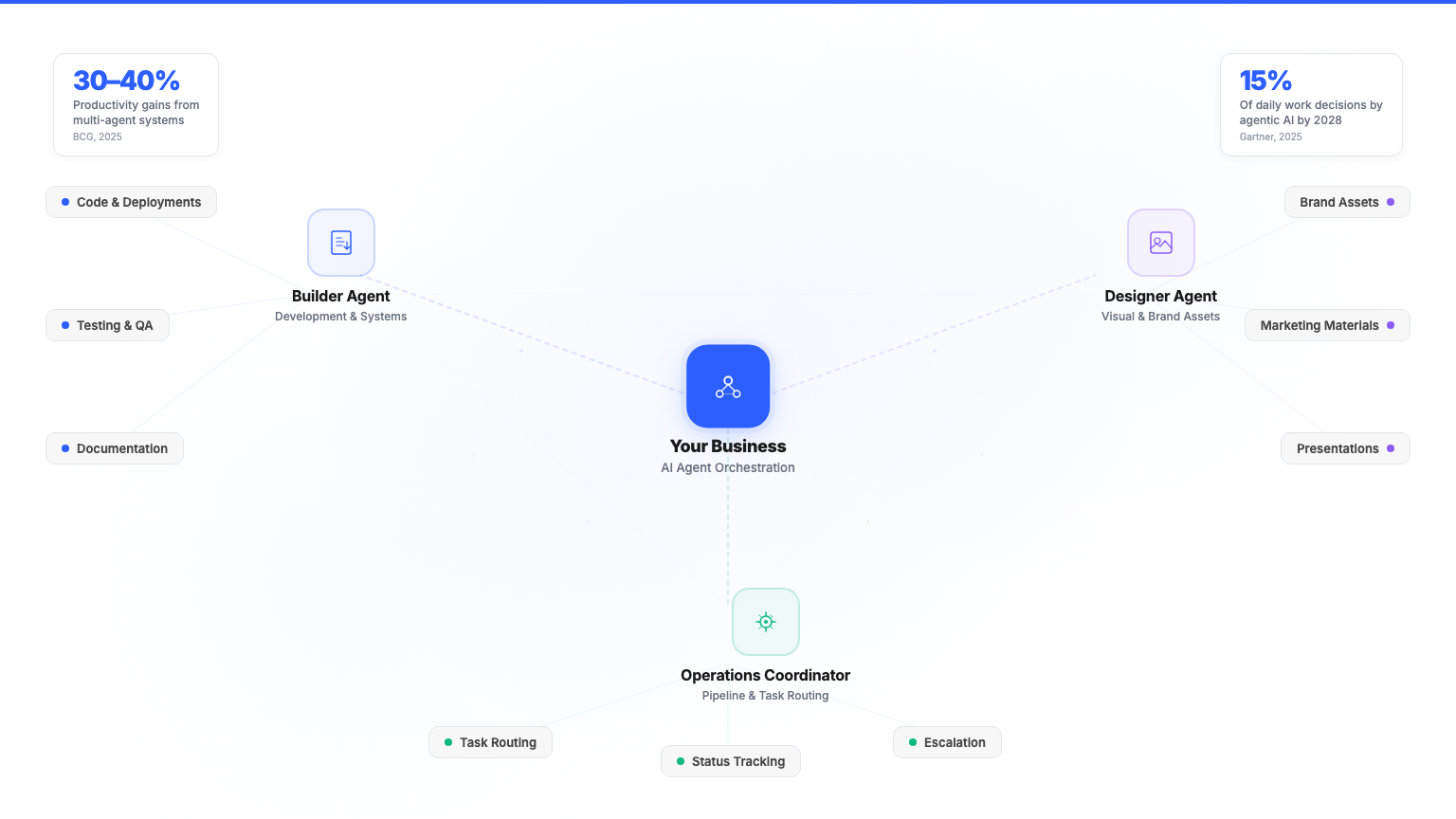

A true Private AI Workforce requires architectural isolation. You must separate the engine executing the logic from the environments holding the data. You must containerize the web browsers the agents use to prevent host network compromise. This level of governance requires a purposeful Docker architecture, not just a quick pull from a public registry.

The OpenClaw Docker Architecture

An enterprise-grade OpenClaw deployment relies on container orchestration to manage three distinct components. Whether you use Docker Swarm, Kubernetes, or a robust docker-compose stack, the underlying logic remains the same: isolate, monitor, and execute.

The Node: The Engine and Queue

The Node is the central orchestrator. It runs in its own container and handles all incoming API requests, manages the background job queue, and routes tasks to the correct local language models (like Llama 3 or Mistral).

Think of the Node container as the dispatcher. It does not store long-term business data itself; instead, it connects to a containerized PostgreSQL database for state management and a Redis container for queueing. Because the Node sits entirely behind your firewall, it can safely orchestrate complex, multi-step operations without sending your proprietary prompts to a public cloud provider.
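The wiring described above can be sketched as a Compose-style service map built in Python. This is a minimal illustration, not an official OpenClaw configuration: the image name `openclaw/node:example`, the environment variable names, the port, and the network name are all assumptions made up for the example. Only `postgres:16` and `redis:7` refer to real public images, and database credentials are deliberately left out.

```python
import json

def build_stack(node_image: str = "openclaw/node:example") -> dict:
    """Return a Compose-style dict: one Node plus its Postgres and Redis backends."""
    return {
        "services": {
            "node": {
                "image": node_image,  # hypothetical image name
                "depends_on": ["postgres", "redis"],
                "environment": {
                    # Hypothetical variable names; check your own distribution.
                    "DATABASE_URL": "postgresql://openclaw@postgres:5432/openclaw",
                    "QUEUE_URL": "redis://redis:6379/0",
                },
                # Publish on loopback only; put your own reverse proxy in front.
                "ports": ["127.0.0.1:8080:8080"],
                "networks": ["backend"],
            },
            "postgres": {
                "image": "postgres:16",
                "volumes": ["pgdata:/var/lib/postgresql/data"],
                "networks": ["backend"],
            },
            "redis": {"image": "redis:7", "networks": ["backend"]},
        },
        "volumes": {"pgdata": {}},
        "networks": {"backend": {}},
    }

def published_services(stack: dict) -> list[str]:
    """Names of services that publish a port to the host."""
    return [name for name, svc in stack["services"].items() if svc.get("ports")]

stack = build_stack()
# Only the Node should be reachable from the host; state and queue stay internal.
assert published_services(stack) == ["node"]
print(json.dumps(stack, indent=2))
```

Building the map in code makes the dispatcher pattern checkable: the assertion fails as soon as someone publishes the database or the queue to the host.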

Workspaces: Isolated Docker Volumes for Strict RBAC

This is where data sovereignty is won or lost. You do not want the HR agent, which is parsing resumes and salary data, to have access to the same file paths as the Finance agent handling vendor invoices.

OpenClaw solves this by mapping Workspaces to isolated Docker volumes. A Workspace provides a strict context environment. When an agent spins up to execute a task, its container mounts a single Docker volume that contains only the credentials, context files, and API keys required for that role. This separation at the volume level enforces strict Role-Based Access Control (RBAC): an agent cannot read a path that was never mounted into its container. If an agent fails, the blast radius is contained within its dedicated volume.
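One way to picture the one-volume-per-role rule is the sketch below. The `ws_<role>` naming scheme, the `/workspace` mount path, and the `Workspace` class itself are hypothetical and chosen only for illustration; they are not OpenClaw identifiers.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Workspace:
    role: str                       # e.g. "hr" or "finance"
    mount_path: str = "/workspace"  # hypothetical path inside the agent container

    @property
    def volume(self) -> str:
        # One named volume per role; the naming scheme is an assumption.
        return f"ws_{self.role}"

    def mount(self) -> str:
        """Compose-style short volume syntax: <volume>:<container path>."""
        return f"{self.volume}:{self.mount_path}"

def assert_isolated(workspaces: list[Workspace]) -> None:
    """Raise ValueError if two workspaces would share a volume."""
    volumes = [w.volume for w in workspaces]
    duplicates = {v for v in volumes if volumes.count(v) > 1}
    if duplicates:
        raise ValueError(f"shared workspace volumes: {sorted(duplicates)}")

hr, finance = Workspace("hr"), Workspace("finance")
assert_isolated([hr, finance])
print(hr.mount())       # ws_hr:/workspace
print(finance.mount())  # ws_finance:/workspace
```

Both agents see the same path inside their container, but each path is backed by a different volume, so the HR agent has no route to the Finance files.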

Browser Relays: Containerized Web Navigation

Agents need to interact with web-based software, especially legacy SaaS tools that lack modern APIs. OpenClaw uses Browser Control Relays to navigate these interfaces, extract data, or click buttons.

Running a browser controlled by an AI agent directly on your host network is dangerous: a misdirected or compromised session can reach any internal service the host can reach. In an enterprise Docker deployment, the Browser Relay runs in its own isolated container, on its own virtual network. It acts as the secure "hands" of the agent, allowing it to perform visual navigation and scrape on-screen data while remaining walled off from your internal corporate network.
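A quick way to reason about that isolation is to list which networks each service joins and compute who can talk to whom. The sketch below assumes a hypothetical layout with two networks, `backend` and `relay_net`; the service and network names are illustrative, not OpenClaw defaults.

```python
# Service -> Docker networks it joins (illustrative names only).
NETWORKS = {
    "node": {"backend", "relay_net"},
    "postgres": {"backend"},
    "redis": {"backend"},
    "browser_relay": {"relay_net"},
}

def reachable_peers(service: str, networks: dict[str, set[str]]) -> set[str]:
    """Services that share at least one network with `service`."""
    mine = networks[service]
    return {
        other
        for other, nets in networks.items()
        if other != service and mine & nets
    }

print(sorted(reachable_peers("browser_relay", NETWORKS)))  # ['node']
```

In this layout the relay can reach the Node and nothing else. The database and the queue are not on any network the relay joins.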

Securing Your Containerized Agents

Once the containers are running, the focus shifts to governance. Here is the blunt truth: browser automation breaks when a SaaS vendor moves a button by three pixels. Unmanaged agents will stall, hallucinate, or fail silently.

For example, when your automated invoice processing agent gets confused by a new PDF layout from your primary steel vendor, what happens? If your Docker environment lacks proper audit logging and human-in-the-loop approval gates, that invoice gets dropped. A secure deployment routes that failure to a human manager in Slack, providing the exact logs from the Node container to diagnose the issue.
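The approval gate in that scenario can be sketched as a small routing function. Everything here is a hypothetical illustration: the `TaskResult` fields, the 0.8 confidence threshold, and the `notify` callable, which stands in for whatever posts a message to your Slack channel.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class TaskResult:
    task_id: str
    ok: bool
    confidence: float  # 0.0 to 1.0, assumed to be reported by the agent
    logs: list[str] = field(default_factory=list)

def route(result: TaskResult,
          notify: Callable[[str], None],
          min_confidence: float = 0.8) -> str:
    """Return 'auto' to proceed, or 'review' after alerting a human."""
    if result.ok and result.confidence >= min_confidence:
        return "auto"
    # Attach the tail of the logs so the reviewer can diagnose the failure.
    tail = "\n".join(result.logs[-5:])
    notify(f"Task {result.task_id} needs review:\n{tail}")
    return "review"

sent: list[str] = []
bad = TaskResult("inv-001", ok=False, confidence=0.2,
                 logs=["parse_pdf: unknown layout"])
print(route(bad, notify=sent.append))  # review
print(sent[0])
```

The point of the design is that a failed or low-confidence task never disappears: it either proceeds automatically or lands in front of a person with its logs attached.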

Security also means network isolation. Your OpenClaw Node container should have outbound access to your local LLM inference server, but the Browser Relay container should only have outbound access to the specific SaaS domains it is authorized to navigate. Restricting each container to its own Docker network limits lateral movement within your infrastructure if one component is compromised.
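The per-relay domain restriction can be expressed as an allowlist check like the one below. This is an application-level sketch only: real enforcement belongs in an egress proxy or firewall rule. The domain `portal.example.com` is a placeholder.

```python
from urllib.parse import urlsplit

def is_allowed(url: str, allowed_domains: set[str]) -> bool:
    """True if the URL's host is an allowed domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed_domains)

# Hypothetical allowlist for a single Browser Relay.
ALLOWED = {"portal.example.com"}

print(is_allowed("https://portal.example.com/invoices", ALLOWED))            # True
print(is_allowed("https://portal.example.com.attacker.net/login", ALLOWED))  # False
print(is_allowed("http://10.0.0.5/admin", ALLOWED))                          # False
```

Matching on the parsed hostname rather than on a substring of the URL is what rejects the look-alike domain in the second call.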

The Difference Between Code and a Managed Workforce

Deploying the OpenClaw Docker containers takes an afternoon. Managing the resulting AI workforce takes continuous oversight.

Mid-market operators cannot afford to pull their senior engineers off core product development to babysit a fleet of AI agents. Managing Node updates, refining Workspace volume permissions, and patching the Browser Relays require dedicated operational focus. This is why attempting to self-manage an enterprise AI deployment often ends in frustration and abandoned infrastructure.

Building the technical foundation is only step one. The real value comes from mapping the business logic, establishing the approval gates, and maintaining the system as your company scales.

Frequently Asked Questions

Can I run OpenClaw using docker-compose?

Yes. A docker-compose file is the standard way to orchestrate the OpenClaw Node, the required PostgreSQL database, and the Redis queue on a single host machine or private server.

Do Workspaces require separate containers?

Workspaces themselves are not separate execution containers. They are isolated Docker volumes that mount into the execution environment, ensuring data boundaries remain strict between different agent roles.

How do Browser Relays communicate with the Node?

The Browser Relay container communicates with the Node over an internal Docker bridge network. This allows the Node to send navigation commands without placing the Browser Relay on the host machine's own network.

Is public internet access required for an OpenClaw Docker deployment?

No. If you are using local language models (like Llama 3) running on your own hardware, the entire OpenClaw Docker stack can operate on a completely air-gapped or private VPC network with zero public internet access.